Interactive 3D Display System (“幻影”, the “Phantom” Display System)


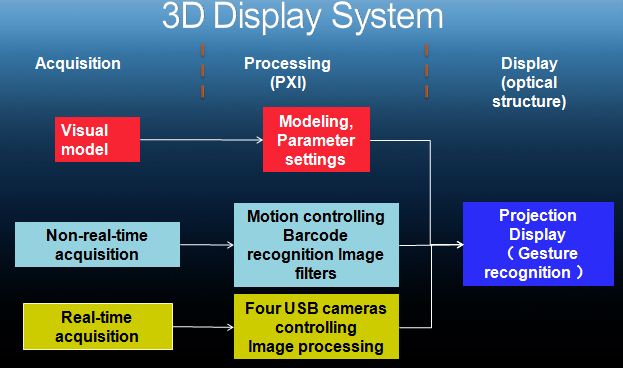

When the Hollywood 3D blockbuster “Avatar” brought a sensational experience to audiences, we began brainstorming a 3D display technology that could be viewed without glasses. This project integrates optics, electronics, and mechanics with interactive 360° stereoscopic display technology. Virtual instrument technologies, including PXI image acquisition, motion control, and signal processing, were used to create the “Stereoscopic Display System,” a low-cost, interactive 360° stereoscopic display design.

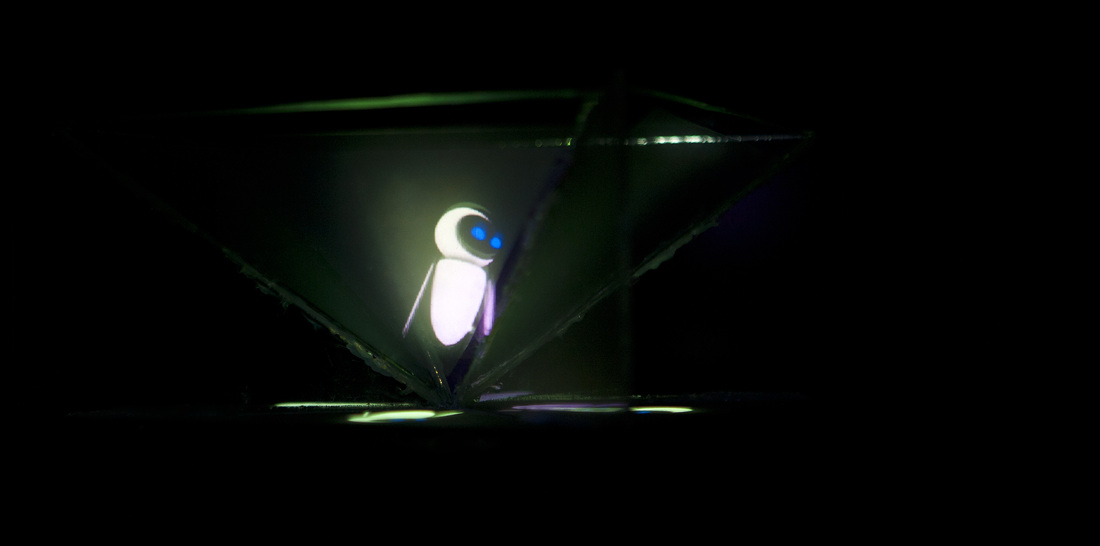

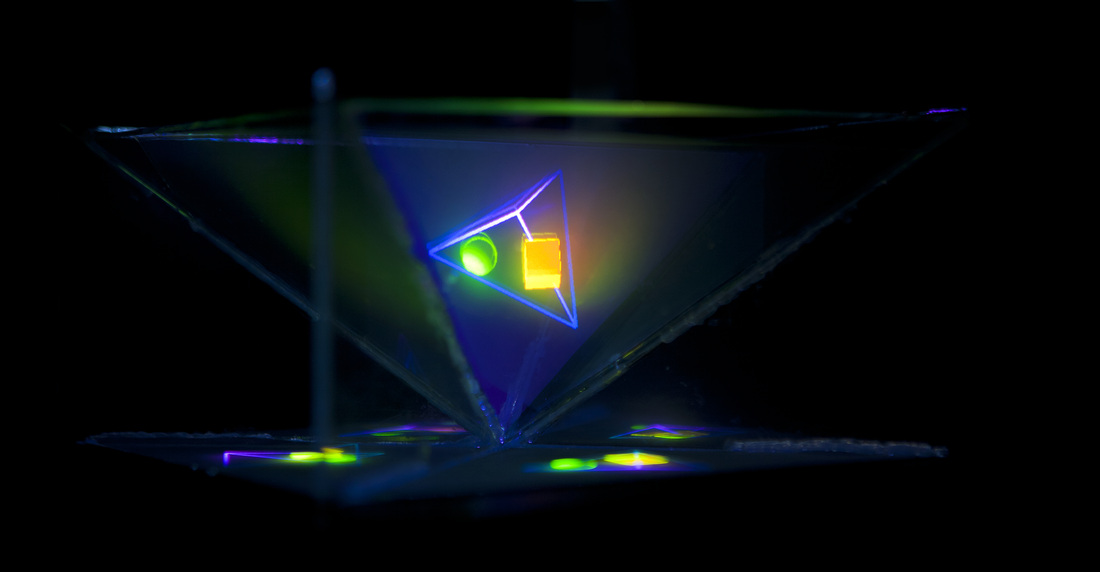

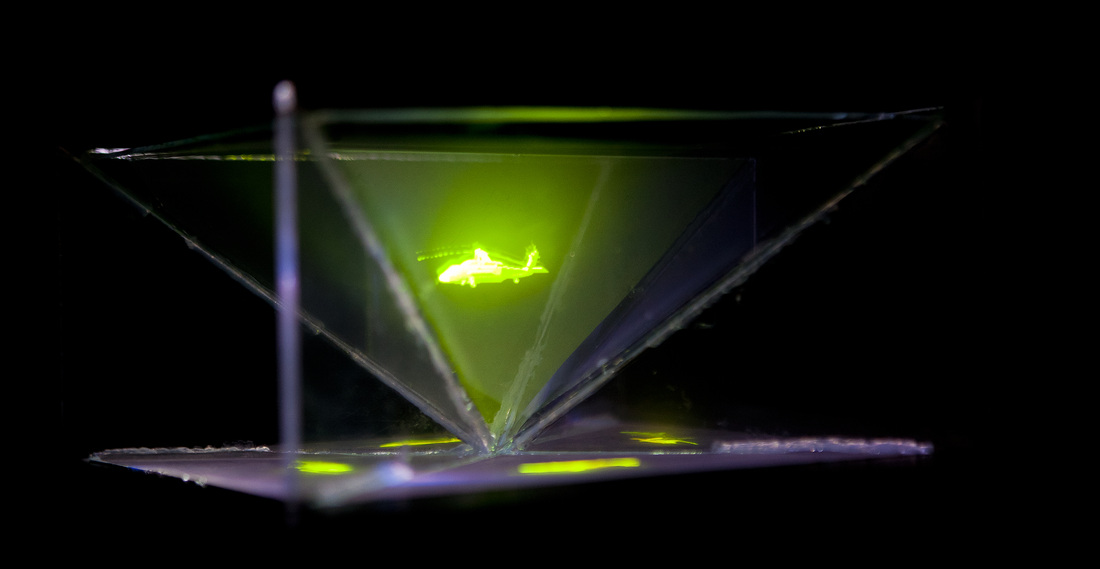

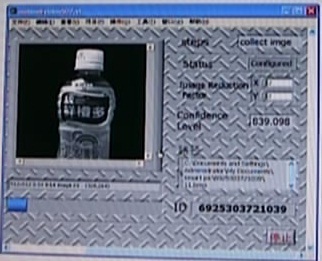

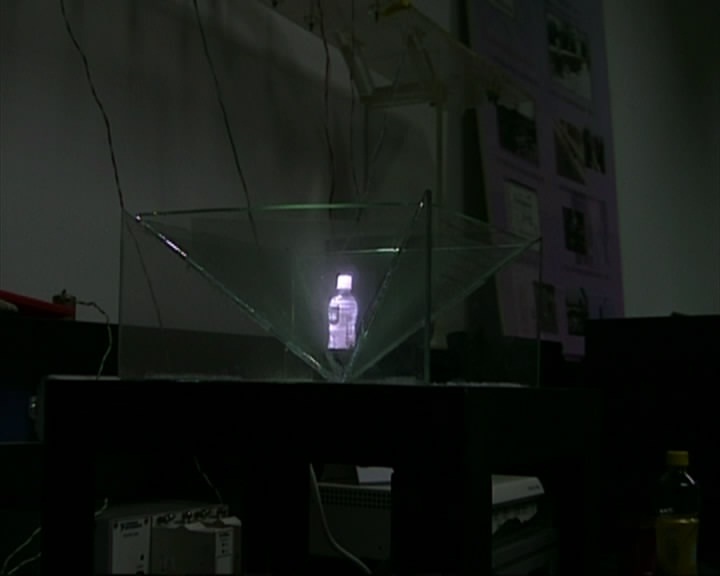

This display system uses four pieces of glass covered with optical film, arranged as an inverted pyramid. A standard VGA projector underneath the display shines images onto all four sides, which reflect them toward your eye, so the light appears to come from inside the pyramid. The display itself is relatively simple; the real challenge is creating source images that show an object from four different perspectives.

The first input method we created uses 3D models of objects. In this case we use a LabVIEW 3D Picture Control to create a sphere and wrap it with a JPEG image. Once the model is set up, we just need to create four picture controls on the front panel and set them to four different camera angles. We then project the LabVIEW front panel onto the screen, and the Earth appears to float in space.

Displaying 3D models was nice, but we wanted to display real objects as well. To do this we needed to capture many sides of an object, so we decided to use a rotating platform and a camera to capture the images. We also wanted to do live image capture. We mounted the cameras in a box at the dimensions appropriate for the display, so that we can show live images that appear to float in space.

At the same time, we built a gesture recognition component that lets users control the display content by waving their hands. You can make the displayed object larger or smaller simply by moving your two hands. This part uses an ordinary USB camera: it captures the movement of the user's hands, and the control is driven by the object centre we find in the image.
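
The gesture control described above can be illustrated with a minimal Python sketch. It is not the project's LabVIEW code. It assumes the camera frame has already been thresholded into a binary mask of hand pixels, that each hand occupies one half of the frame, and that the zoom is the ratio of the current hand spacing to a reference spacing. All function names and parameters here are hypothetical.

```python
from math import hypot


def centroid(mask):
    """Return the (row, col) centre of the nonzero pixels in a 2D mask, or None."""
    count = row_sum = col_sum = 0
    for r, row in enumerate(mask):
        for c, value in enumerate(row):
            if value:
                count += 1
                row_sum += r
                col_sum += c
    if count == 0:
        return None
    return (row_sum / count, col_sum / count)


def hand_centres(mask):
    """Assumption: the left hand is in the left half of the frame, the right hand in the right half."""
    half = len(mask[0]) // 2
    left = centroid([row[:half] for row in mask])
    right = centroid([row[half:] for row in mask])
    if right is not None:
        # Shift the column back into full-frame coordinates.
        right = (right[0], right[1] + half)
    return left, right


def zoom_factor(mask, reference_distance):
    """Scale factor = current distance between hand centres / reference distance."""
    left, right = hand_centres(mask)
    if left is None or right is None or reference_distance <= 0:
        return 1.0  # Leave the object unchanged when a hand is missing.
    distance = hypot(right[0] - left[0], right[1] - left[1])
    return distance / reference_distance


if __name__ == "__main__":
    frame = [
        [0, 0, 0, 0, 0, 0, 0, 0],
        [0, 1, 0, 0, 0, 0, 1, 0],
        [0, 0, 0, 0, 0, 0, 0, 0],
    ]
    print(zoom_factor(frame, reference_distance=2.5))
```

Moving the hands apart increases the distance between the two centres and so enlarges the object; moving them together shrinks it. A real system would also need to smooth the factor between frames.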

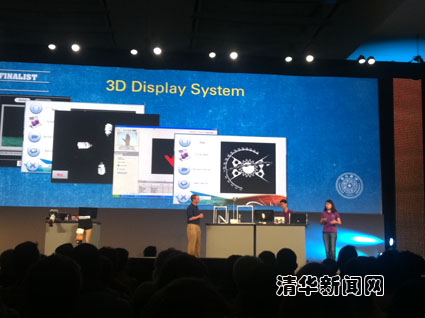

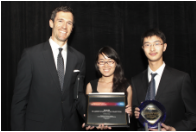
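
The four camera angles used for the pyramid's four sides can be sketched in a few lines of Python. This is an illustration only, not the LabVIEW 3D Picture Control API. It assumes the four virtual cameras sit at equal distance from the object, spaced 90° apart around the vertical axis. The function name and the coordinate convention are hypothetical.

```python
from math import cos, sin, radians


def camera_positions(distance, height=0.0, views=4):
    """Return (x, y, z) camera positions evenly spaced around the vertical z axis.

    Assumption: the object sits at the origin and every camera looks at it.
    """
    positions = []
    for i in range(views):
        angle = radians(360.0 * i / views)
        x = round(distance * cos(angle), 9)  # Round away floating-point noise.
        y = round(distance * sin(angle), 9)
        positions.append((x, y, height))
    return positions


if __name__ == "__main__":
    for side, position in zip(("front", "right", "back", "left"), camera_positions(5.0)):
        print(side, position)
```

Each position would feed one of the four picture controls, so that each side of the pyramid shows the object from the matching direction. Which side is called "front" is arbitrary here.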

Awards

We won the first Chinese National Virtual Instrument Competition and the Global LabVIEW Student Design Competition, and gave a keynote speech at NIWeek 2011. You can download the poster here and find more details in the following video.